Why PDF/A-b Fails Machine Reading

ArticleMarch 12, 2026

ArticleMarch 12, 2026

About Benson Hendall, Softflakes

There is a specific class of document failure that is uniquely treacherous because it is entirely invisible. You open the file. The text renders perfectly. A human reader sees exactly what was intended. But ask a machine to extract that text—a search indexer, a RAG pipeline, a language model—and what comes back is garbage. Or nothing at all.

This isn't a bug in the extraction software. It is a direct consequence of what the PDF/A-b standard actually guarantees, and, crucially, what it deliberately ignores.

Glyphs Are Not Characters

To understand why this happens, we have to look at how PDF architecture handles text. It is less intuitive than most people assume. When a PDF renderer displays text on your screen, it isn't drawing letters. It is drawing glyphs—the visual shapes associated with characters in a specific font program.

A standard PDF stores text as a sequence of these glyph identifiers. The renderer maps them to drawing instructions, and you see readable words. But structurally? The file is just holding a list of shape references.

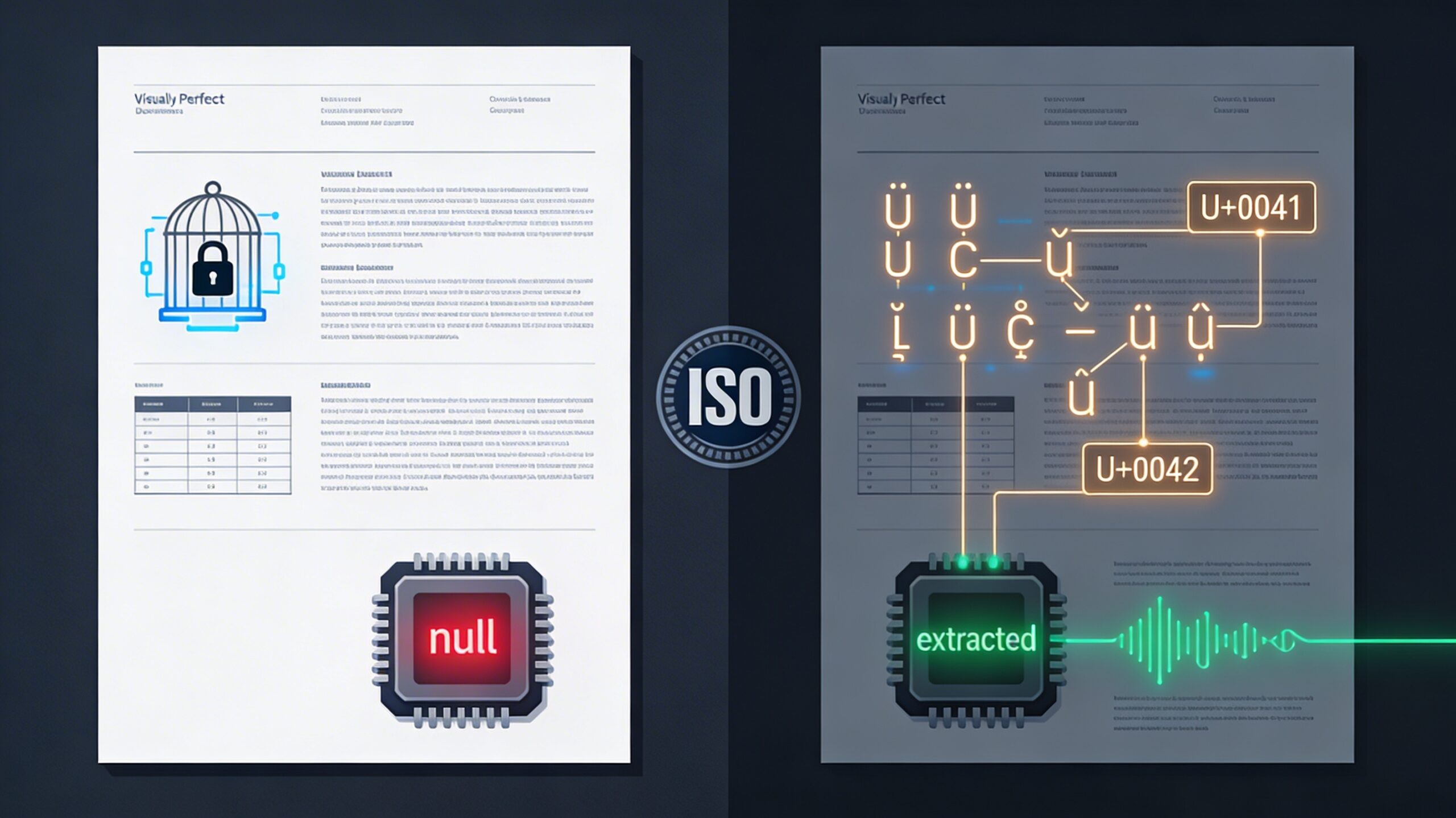

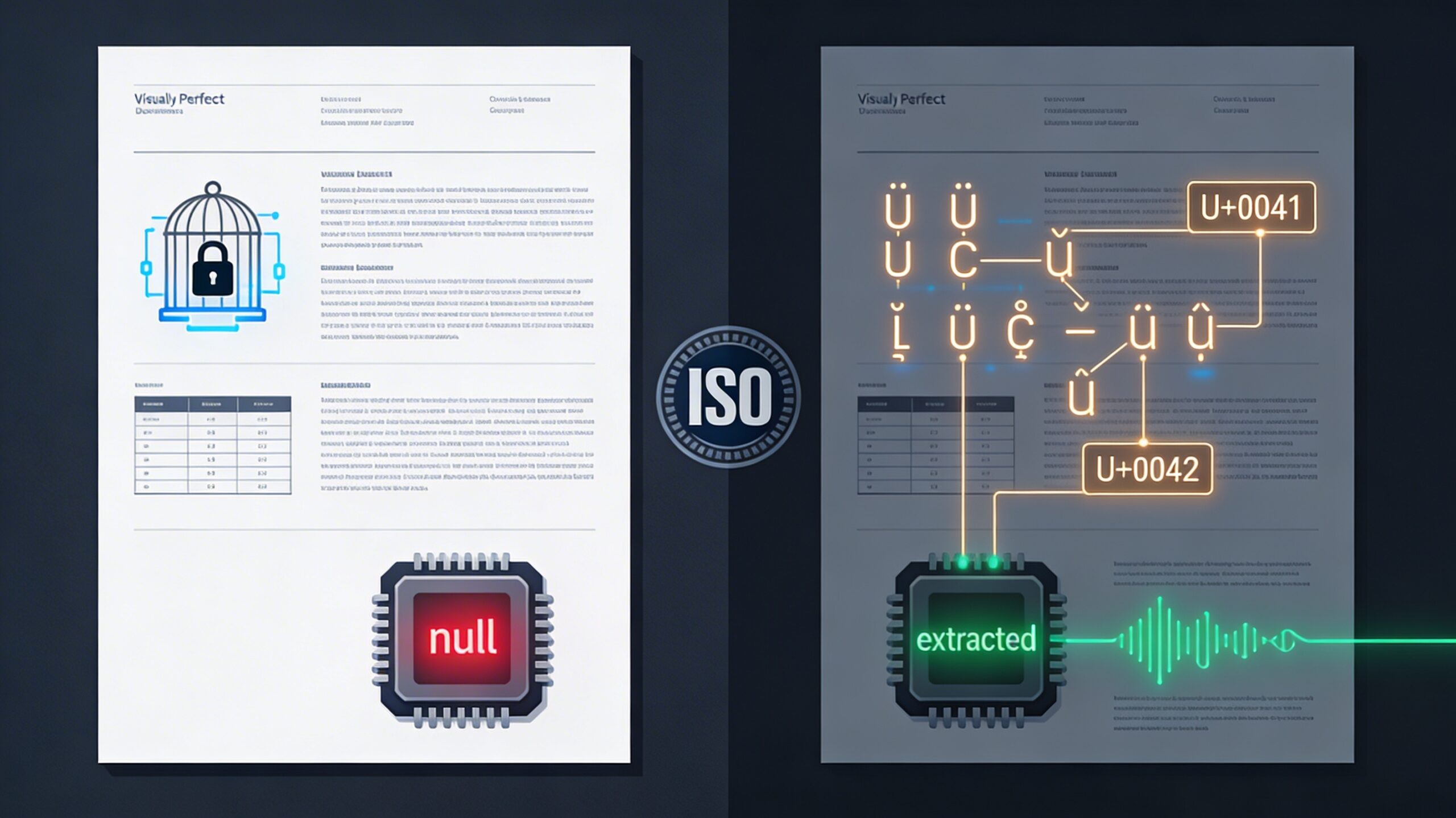

To make that text searchable or extractable, you need a separate data structure: a ToUnicode CMap. This mapping table explicitly records which Unicode code point belongs to which glyph. Without it, extraction software is flying blind. It knows a shape was drawn on the page, but it has no structural idea what that shape actually means. This gap—between rendering a shape and encoding a character—is where PDF/A-b archives quietly fall apart.

What PDF/A-b Actually Promises

PDF/A-b (where the "b" stands for Basic) is defined under ISO 19005 to guarantee one thing: reliable visual reproduction. Its sole commitment is that a document will look exactly the same decades from now as it does today, regardless of the operating system or software used to open it.

To achieve this, PDF/A-b mandates that all fonts be fully embedded within the file. No external references, no risky runtime font substitutions. The visual output is permanently locked in.

However, PDF/A-b does not require those embedded fonts to carry a ToUnicode CMap. The standard mandates visual fidelity, not machine readability. For its original, historical purpose—preserving a visual record—this was a perfectly coherent trade-off. But for modern enterprises trying to feed their archives into AI models, it is a massive structural blind spot.

Where Extraction Breaks

When text extraction libraries (like PyMuPDF, Apache PDFBox, or the parsers buried inside enterprise content management systems) attempt to read a PDF, they look for the ToUnicode CMap first. If it is missing, they fall back to heuristics: guessing via standard encoding tables, glyph name lookups, or internal font metrics.

These fallbacks might survive a simple Latin text using standard system fonts. They fail completely against the reality of enterprise archives: documents with custom subset-embedded fonts, legacy files from older authoring tools, or scanned materials processed through basic OCR engines that never enforced Unicode normalisation.

The failure modes are notoriously messy. Sometimes the parser returns an empty string. Sometimes it spits out replacement characters. But the most dangerous failure mode is when it returns text that looks plausible but is fundamentally wrong—characters misidentified because a glyph index happened to align with a different code point in the fallback table. The downstream system accepts it as fact, because no error was ever thrown.

The RAG Pipeline Hallucination

This brings us to the modern AI stack. A Retrieval-Augmented Generation (RAG) system ingests documents by converting their text into vector embeddings. The quality of that embedding is entirely held hostage by the quality of the extracted text.

If you feed a PDF/A-b archive without Unicode mappings into a RAG pipeline, you get one of three outcomes. The document becomes functionally invisible (an empty embedding). It returns nonsensical results (a corrupted embedding). Or, worst of all, it generates a plausible-but-incorrect embedding built on silently misidentified characters.

The phrase "garbage in, garbage out" is too generous here. Garbage implies the system knows the data is bad. The failure mode here is confident wrongness—a language model retrieving and synthesizing corrupted text without a single warning flag that the source encoding was compromised.

The PDF/A-2u Mandate

ISO 19005 addressed this exact gap with the introduction of the "u" (Unicode) conformance level. PDF/A-2u enforces all the strict visual fidelity rules of Level b, but adds a non-negotiable condition: every font must include a ToUnicode CMap that correctly maps all glyphs to their Unicode equivalents.

Visually, a Level b and a Level u file are indistinguishable. Structurally, they exist in different eras. A PDF/A-2u file can be ingested by any standards-compliant extraction library without heuristics, without guessing, and without silent data corruption.

This is why specifying "PDF/A compliance" in a procurement document or data governance policy is essentially meaningless unless you specify the conformance level. If your archive is ever going to be queried by software—whether for legal eDiscovery, search indexing, or LLM ingestion—Level b is insufficient. The standard isn't broken; we just stopped using it exclusively for human eyes.